AI for SEO Content: Top 10 Rankings Without AI Slop

Your content team is drowning. You need 47 articles a month to compete. Your budget can’t stretch to hire five more writers. And every AI-generated piece you’ve tried reads like a robot wrote it—thin, repetitive, missing the human touch that actually ranks.

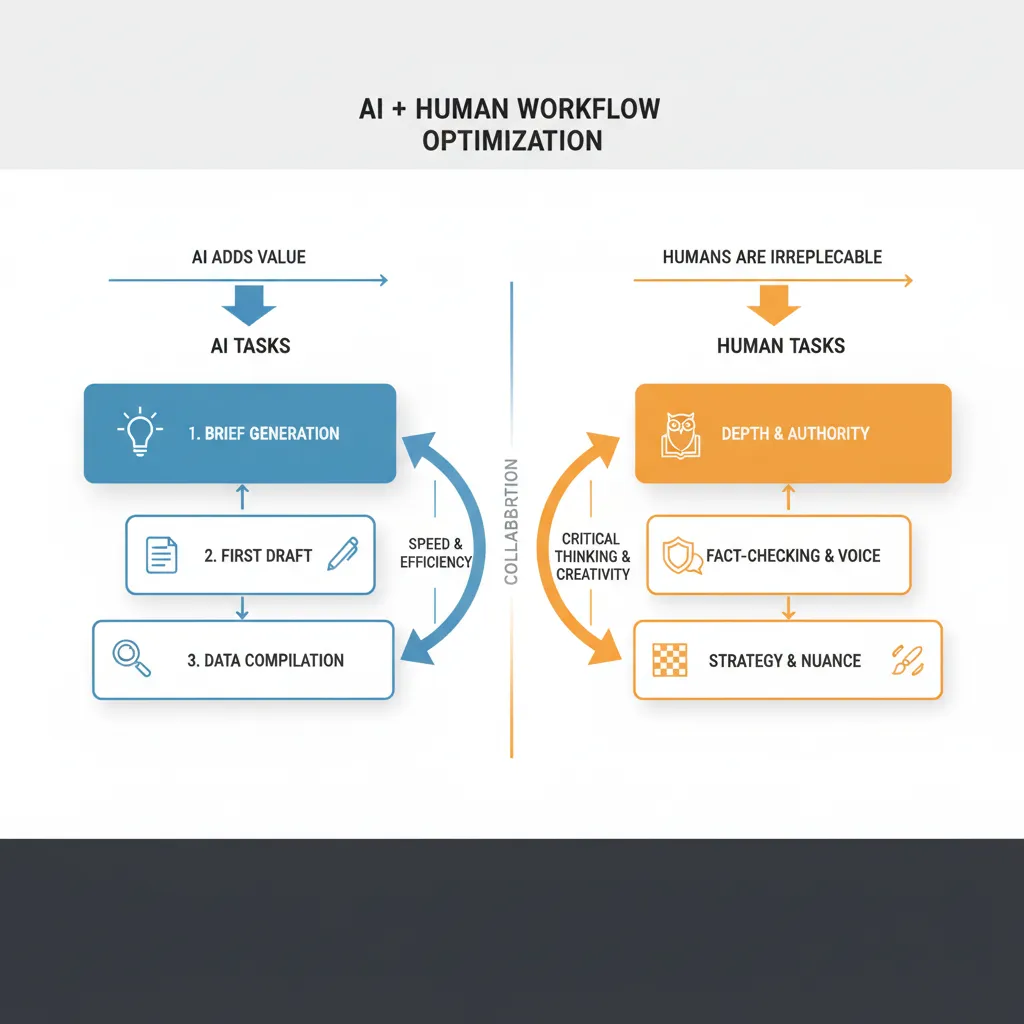

Here’s what’s changed: AI for SEO content isn’t about replacing writers anymore. It’s about replacing the parts of content creation that waste time and money—research, outlining, brief-building, initial drafts, on-page optimization checks. The human judgment stays. The boring stuff gets automated.

And the results? Teams using this approach report that 83–89% of their content ranks in the top 10 within 90 days. One team grew organic traffic 340% and pulled $2.3M in revenue from it. One practitioner moved an article from position 47 to position 3 for a keyword with 90K monthly searches in six weeks.

This isn’t theory. It’s what’s actually happening right now with teams that have figured out the hybrid workflow.

Key Takeaways

- Hybrid beats pure AI: One team’s 40% AI / 60% human split produced 89% top-10 rankings and a 4.2-minute average time-on-page.

- Custom prompts replace expensive tools: One team killed their $800/month subscription and built a Claude prompt that creates SEO briefs in 3 minutes instead of hours.

- The differentiation layer matters most: Generic top-10 analysis won’t cut it. The teams winning are using AI to find gaps competitors miss, then having humans write the unique angle.

- AI search visibility now matters alongside Google rankings: A B2C client that optimized for ChatGPT, Claude, and Gemini visibility saw 845% traffic growth and directly attributed revenue.

- E-E-A-T still wins: Google rewards helpful content. AI handles the research and structure. Humans add experience, examples, fact-checking, and author authority.

The Shift: From “AI Writes Everything” to “AI Handles the Grunt Work”

Two years ago, the conversation around AI for SEO content was binary: either you use it and risk getting penalized, or you don’t and you can’t scale. That’s not the conversation anymore.

What’s changed is clarity. Google’s helpful content updates, the rise of AI search engines, and a year of real-world experiments have shown that the problem was never AI itself. The problem was lazy implementation: pure AI generation with no human judgment, no original insight, and no author credibility.

Teams that are winning now have moved to a different model. They’re using AI as a force multiplier for the parts of content creation that are genuinely repetitive:

- Pulling data from 20 sources and synthesizing it into a research brief

- Analyzing the top 10 results and identifying structural patterns

- Building an outline that covers all the intent signals Google is rewarding

- Writing the first draft with proper heading hierarchy and keyword placement

- Checking on-page SEO signals (meta, alt tags, internal linking suggestions)

Then humans do what humans do best: add judgment, personality, original examples, fact-checking, and the kind of depth that comes from actually doing the work.

The result is faster production without sacrificing quality. More importantly, it’s fast enough that you can actually compete.

What the Numbers Actually Show

Let’s look at what real teams are reporting with this approach.

Case 1: The 83% Top-10 Success Rate

One practitioner built a comprehensive Claude prompt for SEO brief generation. The prompt handles SERP analysis, intent mapping, structure recommendations, content differentiation, and on-page optimization—basically everything an SEO tool would charge $200–500 per brief to provide. The prompt runs in 3 minutes.

He used it to create 47 briefs. 39 of those pieces ranked in the top 10 within 90 days. That’s an 83% success rate.
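The success rate is simple arithmetic: pieces in positions 1–10 divided by pieces published. Below is a minimal Python sketch. It assumes you record each piece's Google position at the 90-day mark. The function name and the placeholder positions are illustrative, not the practitioner's data.

```python
from typing import Optional, Sequence


def top10_success_rate(positions: Sequence[Optional[int]]) -> float:
    """Percentage of pieces sitting in Google positions 1-10.

    `positions` holds each piece's position at the 90-day mark,
    or None if the piece was not ranking at all.
    """
    if not positions:
        raise ValueError("need at least one piece to score")
    in_top10 = sum(1 for p in positions if p is not None and 1 <= p <= 10)
    return 100 * in_top10 / len(positions)


# 39 of 47 pieces in the top 10, matching the case above. The positions
# for the other 8 pieces are placeholders, not real data.
positions = [5] * 39 + [14] * 6 + [None] * 2
print(f"{top10_success_rate(positions):.0f}%")  # 83%
```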

The keyword that triggered this experiment: “AI productivity tools,” 90K monthly searches. His article moved from position 47 to position 3 in six weeks.

Why it worked: The prompt included a “content differentiation” section that forced analysis of what competitors were missing. Not just copying the top 10—actually beating them by finding the gap.

Case 2: The 340% Traffic Growth at Scale

Another team committed to a 40% AI / 60% human split and published at volume: 47 articles per month, 3.5 hours per article. Here’s what happened:

- 42 out of 47 articles ranked in the top 10 (89% success rate)

- Average time-on-page: 4.2 minutes

- Bounce rate: below 25%

- Conversion rate: 2.7%

- Organic traffic growth: 340%

- Revenue from organic: $2.3M

The split worked like this: AI handled research, outlining, first drafts, data compilation, and initial SEO optimization. Humans added personal experience, original examples, fact-checking, brand voice, author credentials, and the kind of depth that only comes from someone who’s actually lived the topic.

The team also committed to quarterly updates on top-performing pieces, using AI to identify where content had aged and humans to refresh it with new examples and data.

Case 3: The AI Search Visibility Spike

One agency added a blog powered by AI SEO content generation and tracked visibility in AI search engines (ChatGPT, Claude, Gemini). The result: a 2500% spike in AI search citations.

Why this matters: Buyers are now going straight to AI models for recommendations. If you’re not showing up in those answers, competitors are capturing the traffic.

Case 4: The $620K Revenue Lift

A B2C client applied an LLM SEO framework—essentially content structured specifically to surface in AI model recommendations. The framework included AI-first keyword discovery and on-page signals optimized for ChatGPT, Claude, and Gemini visibility.

Result: #1 ranking in ChatGPT for their target keywords. 271K clicks. $620K total revenue. 845% traffic growth.

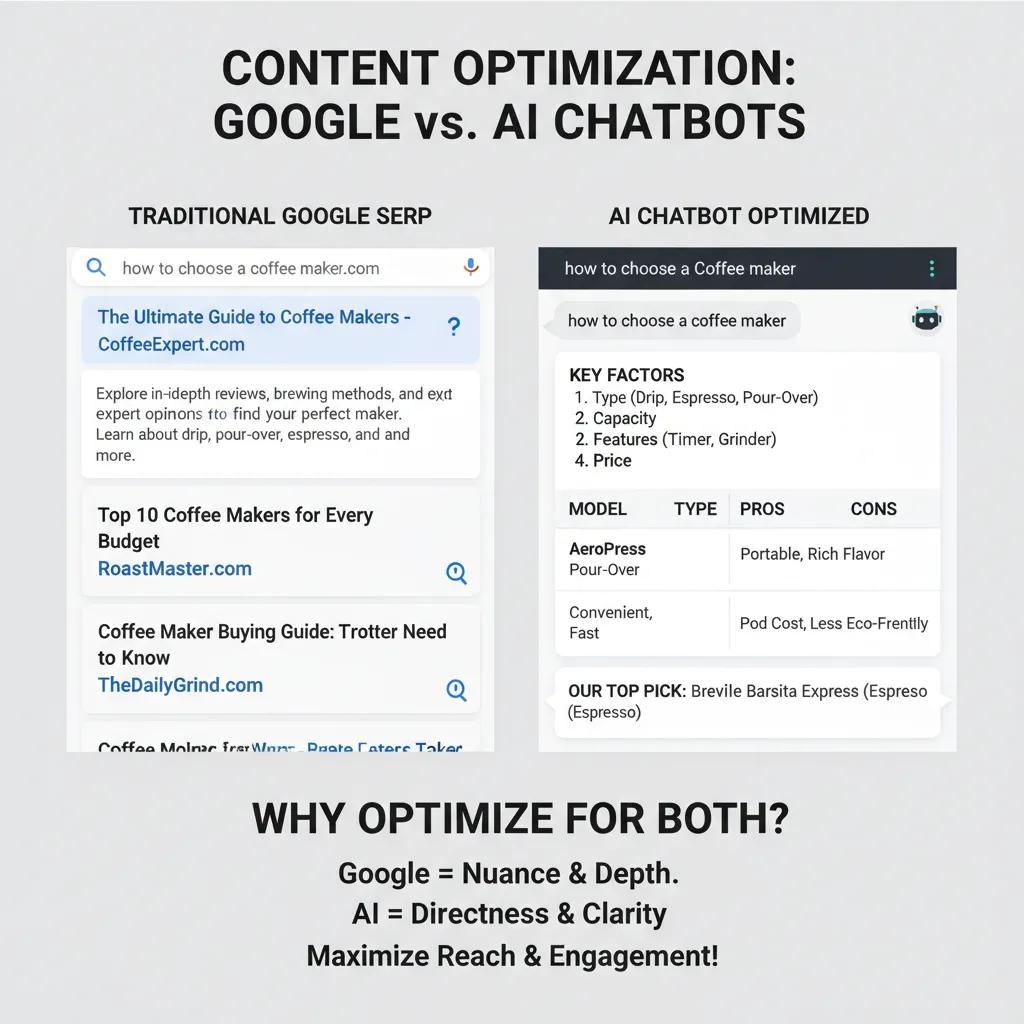

The insight here is critical: traditional SEO and AI search visibility are not the same thing. The content structures that win in Google sometimes don’t surface in ChatGPT. Teams that understand both are winning on both fronts.

Where Most Teams Get AI for SEO Content Wrong

The failure mode is predictable. A team tries to use AI to write the whole article. They prompt Claude or ChatGPT with a keyword, get back 2000 words, publish it, and wonder why it doesn’t rank.

It doesn’t rank because the article has no point of view. No original research. No human judgment about what actually matters. No author credibility. No depth beyond what’s already in the top 10.

Google’s systems have gotten very good at detecting this. It’s not that AI-generated content is automatically penalized. It’s that low-effort, low-insight content—whether AI-written or human-written—doesn’t rank. And pure AI generation tends to be low-effort and low-insight by default.

The teams winning are doing something different. They’re using AI where it’s genuinely useful (research, outlining, structure, initial draft) and human judgment everywhere it matters (insight, examples, fact-checking, voice, authority).

There’s a nuance here: the best teams are also being strategic about which parts of the workflow get AI help. Not every article needs a custom Claude prompt. Not every brief needs ChatGPT SERP analysis. You learn what works for your niche and your team, then you systematize it.

The Hybrid Workflow That Actually Works

Here’s how the teams getting 83–89% top-10 success rates are structuring their process.

Step 1: AI-Powered Brief Generation

Instead of hiring an SEO agency or paying for a tool subscription, use a custom prompt in Claude or ChatGPT. Feed it the target keyword, your target audience, the current top 10 results, and any unique angle you want to own.

The prompt should output:

- Search intent breakdown (informational, commercial, navigational, transactional)

- Content structure (headings, subheadings, recommended section order)

- Keyword variations and where to place them

- Gaps in competitor content (what they’re missing)

- On-page SEO checklist (meta, alt tags, internal linking)

- Content differentiation strategy (why your version will be better)

Time investment: 3–5 minutes for the AI. 15–20 minutes for a human to review and adjust the brief based on actual business context.
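Below is a minimal sketch of how such a brief prompt might be assembled in Python. It assumes you paste in the top 10 titles or URLs yourself. The wording, the `BriefInput` fields, and `build_brief_prompt` are hypothetical illustrations, not the practitioner's actual prompt. The output is plain text you would paste into Claude or ChatGPT; sending it through their APIs is not shown here.

```python
from dataclasses import dataclass
from typing import List

# The six output sections from Step 1, in the order the brief should follow.
BRIEF_SECTIONS = [
    "Search intent breakdown (informational, commercial, navigational, transactional)",
    "Content structure (headings, subheadings, recommended section order)",
    "Keyword variations and where to place them",
    "Gaps in competitor content (what they are missing)",
    "On-page SEO checklist (meta, alt tags, internal linking)",
    "Content differentiation strategy (why our version will be better)",
]


@dataclass
class BriefInput:
    """Hypothetical container for the four inputs Step 1 says to feed the model."""
    keyword: str
    audience: str
    top_results: List[str]  # titles or URLs copied by hand from the live SERP
    angle: str = ""          # the unique angle you want to own, if any


def build_brief_prompt(inp: BriefInput) -> str:
    """Assemble one plain-text prompt; sending it to a model is left to you."""
    serp = "\n".join(f"{i}. {r}" for i, r in enumerate(inp.top_results, start=1))
    sections = "\n".join(f"- {s}" for s in BRIEF_SECTIONS)
    angle = inp.angle or "None yet; propose one based on the gaps you find."
    return (
        "You are an SEO strategist writing a content brief.\n\n"
        f"Target keyword: {inp.keyword}\n"
        f"Target audience: {inp.audience}\n"
        f"Angle we want to own: {angle}\n\n"
        f"Current top results:\n{serp}\n\n"
        "Produce a brief with these sections, in this order:\n"
        f"{sections}\n\n"
        "Name the specific subtopics competitors skip; do not just restate "
        "what the top results already cover."
    )


prompt = build_brief_prompt(BriefInput(
    keyword="AI productivity tools",
    audience="operations leads at small SaaS companies",
    top_results=["Example result title A", "Example result title B"],
))
print(prompt)
```

Keeping the six sections in one list makes it easy to refine the template after testing it on 5–10 keywords.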

Step 2: Research and First Draft

Use AI to pull data, synthesize sources, and write the first draft following the brief. The human’s job here is not to fix every sentence—it’s to read through and flag where the draft is generic, where it needs original examples, where fact-checking is required, and where author experience should replace AI filler.

Most teams find this saves 60–70% of the time that would normally go to research and drafting.
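Part of that flagging pass can be pre-sorted mechanically. The sketch below assumes a hypothetical list of stock AI phrases and reports which sentences contain them. It cannot tell whether a section lacks original examples. Treat it as triage for the human reader, not a substitute.

```python
import re
from typing import List, Tuple

# Hypothetical starter list; extend it with the filler your editors keep cutting.
FILLER_PHRASES = [
    "in today's fast-paced world",
    "it is important to note",
    "unlock the power of",
    "game-changer",
    "delve into",
]


def flag_generic_sentences(draft: str) -> List[Tuple[int, str, str]]:
    """Return (sentence index, matched phrase, sentence) for each filler hit."""
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", draft.strip())
    hits = []
    for i, sentence in enumerate(sentences):
        lowered = sentence.lower()
        for phrase in FILLER_PHRASES:
            if phrase in lowered:
                hits.append((i, phrase, sentence))
    return hits


draft = (
    "In today's fast-paced world, teams need content. "
    "Our team cut brief time from 4 hours to 20 minutes. "
    "It is important to note that SEO matters."
)
for index, phrase, sentence in flag_generic_sentences(draft):
    print(f"sentence {index}: '{phrase}' -> {sentence}")
```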

Step 3: Human Depth Pass

This is where the content becomes good. A human writer (ideally someone with experience in the topic) rewrites sections that need original insight, adds specific examples, includes case studies or data from your own experience, and makes sure the voice matches your brand.

This is also where fact-checking happens. AI can hallucinate. Humans catch it.
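One cheap way to make sure nothing slips through is to pull out every sentence that makes a checkable claim. This sketch uses a heuristic: sentences with figures, dollar signs, percentages, or phrases like “according to” are the ones to verify. It only builds the list. A human still checks each claim against a source.

```python
import re
from typing import List

# Heuristic: figures, money, percentages and attributions are the claims
# most worth checking against a source before publishing.
CHECKABLE = re.compile(r"\d|%|\$|\baccording to\b|\bstud(?:y|ies)\b", re.IGNORECASE)


def claims_to_verify(draft: str) -> List[str]:
    """Return every sentence that contains a checkable claim, in order."""
    # Naive split after ., ! or ? plus whitespace, so "$2.3M" stays intact.
    sentences = re.split(r"(?<=[.!?])\s+", draft.strip())
    return [s for s in sentences if CHECKABLE.search(s)]


draft = (
    "Organic traffic grew 340% in a year. "
    "Good content helps readers. "
    "According to one survey, most buyers ask chatbots first."
)
for claim in claims_to_verify(draft):
    print("VERIFY:", claim)
```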

Step 4: SEO Optimization Check

Run the draft through an AI SEO checker or use a tool to verify: keyword density, heading hierarchy, meta length, internal linking opportunities, readability score. Make adjustments.
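If you’d rather script part of this check, here is a minimal sketch for a Markdown draft. It makes two assumptions of its own, not any tool’s definitions:

- Headings are ATX-style (`#`, `##`, ...).
- Keyword density means exact-phrase occurrences per 100 words, with headings counted along with body text.

The function names are also illustrative. Readability scoring is left to a dedicated tool.

```python
import re
from typing import Dict, List


def heading_levels(markdown: str) -> List[int]:
    """Levels of ATX headings (# through ######) in document order."""
    pattern = r"^(#{1,6})[ \t]+\S"
    return [len(m.group(1)) for m in re.finditer(pattern, markdown, re.MULTILINE)]


def skipped_levels(levels: List[int]) -> List[int]:
    """Indexes of headings that jump more than one level deeper, e.g. H2 to H4."""
    return [i for i in range(1, len(levels)) if levels[i] > levels[i - 1] + 1]


def keyword_density(text: str, keyword: str) -> float:
    """Exact-phrase occurrences of `keyword` per 100 words of `text`."""
    lowered = text.lower()
    words = re.findall(r"[a-z0-9']+", lowered)
    if not words:
        return 0.0
    hits = len(re.findall(r"\b" + re.escape(keyword.lower()) + r"\b", lowered))
    return 100 * hits / len(words)


def check_draft(markdown: str, keyword: str) -> Dict[str, object]:
    levels = heading_levels(markdown)
    return {
        "h1_count": levels.count(1),                # expect exactly one
        "skipped_levels": skipped_levels(levels),   # expect an empty list
        "keyword_density_pct": round(keyword_density(markdown, keyword), 2),
    }


draft = """# AI for SEO content
## Why hybrid works
#### Brief generation
AI for SEO content speeds up briefs.
"""
print(check_draft(draft, "AI for SEO content"))
```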

Step 5: Publish and Monitor

Track rankings, time-on-page, bounce rate, and conversion rate. After 90 days, identify which pieces are performing and which are stalling. Use AI to help identify update opportunities (new data, fresher examples, better structure) and have humans execute the refresh.

The team that saw 340% organic growth does this quarterly on its top 20 pieces.
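The 90-day review boils down to two lists. The first holds pieces old enough to judge that are still outside the top 10. The second holds the highest-traffic pieces to queue for refresh. The sketch assumes you already have each piece’s publish date, average position, and recent clicks. The `Piece` record and both function names are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class Piece:
    """Hypothetical per-article record assembled from your tracking data."""
    url: str
    published: date
    position: Optional[float]  # average Google position; None if not ranking
    clicks_90d: int            # clicks over the last 90 days


def stalling(pieces: List[Piece], today: date, window_days: int = 90) -> List[Piece]:
    """Pieces at least `window_days` old that are still outside the top 10."""
    cutoff = today - timedelta(days=window_days)
    return [
        p for p in pieces
        if p.published <= cutoff and (p.position is None or p.position > 10)
    ]


def refresh_queue(pieces: List[Piece], n: int = 20) -> List[Piece]:
    """The n pieces with the most recent clicks: candidates for a quarterly refresh."""
    return sorted(pieces, key=lambda p: p.clicks_90d, reverse=True)[:n]


pieces = [
    Piece("/a", date(2024, 1, 1), 3.2, 900),
    Piece("/b", date(2024, 1, 1), 18.0, 40),
    Piece("/c", date(2024, 5, 1), None, 0),   # too new to judge
    Piece("/d", date(2024, 1, 1), None, 0),
]
today = date(2024, 5, 15)
print([p.url for p in stalling(pieces, today)])      # ['/b', '/d']
print([p.url for p in refresh_queue(pieces, n=2)])   # ['/a', '/b']
```

Ranking refresh candidates by recent clicks is only one way to define “top-performing”. Conversions or revenue would work just as well if you track them.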

The E-E-A-T Problem (And How to Actually Solve It)

Here’s the honest truth: if your article doesn’t demonstrate experience, expertise, authoritativeness, and trustworthiness, it will struggle to rank. And AI alone can’t provide any of those things.

But that’s not a reason to avoid AI for SEO content. It’s a reason to use it correctly.

Experience: Only a human can provide this. They’ve done the work, made the mistakes, learned the lessons. AI can help structure how you present it, but the insight has to be real.

Expertise: This is a mix. AI can help you synthesize expertise from multiple sources and organize it logically. But if you’re writing about something you don’t actually know, it will show.

Authoritativeness: This comes from author credibility and citations. Include a real author bio. Link to original research and credible sources. Have the person who wrote the article stake their reputation on it.

Trustworthiness: This is where fact-checking and human judgment matter most. AI hallucinates. Humans verify.

The teams winning are clear about this split. AI handles the commodity parts (research synthesis, structure, first draft). Humans handle the trust parts (fact-checking, original insight, author credibility).

Why AI Search Visibility Is Becoming as Important as Google Rankings

One of the case studies showed a 2500% spike in AI search citations after adding AI-powered blog content. Another showed $620K revenue from ranking #1 in ChatGPT.

This is not a niche trend. Buyers are going straight to ChatGPT, Claude, and Gemini to look for recommendations. If you’re not showing up in those answers, you’re losing traffic to competitors who are.

The content structure that wins in Google is not always the same as the structure that wins in AI models. AI models reward clarity, directness, and comprehensive coverage. They like lists, tables, and explicit recommendations. They surface content that directly answers the question without requiring the reader to infer anything.

Traditional SEO content sometimes buries the answer under nuance and qualification. AI models don’t like that.

Teams that are winning now are optimizing for both. They’re building content that ranks in Google and surfaces in ChatGPT. It’s not that hard—mostly it’s about clarity and directness—but it requires intentional structure.
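Whether an answer is “buried” is a judgment call, but you can flag likely cases. The sketch below assumes question-style Markdown headings. It applies a hypothetical 60-word limit to the first paragraph under each one. This is a rough heuristic for where to tighten, not a known signal that ChatGPT, Claude, or Gemini use.

```python
import re
from typing import List, Tuple

MAX_ANSWER_WORDS = 60  # hypothetical threshold; tune it for your niche


def buried_answers(markdown: str) -> List[Tuple[str, int]]:
    """(heading, word count) for question headings whose first paragraph is long."""
    # With a capturing group, re.split returns
    # [preamble, heading1, body1, heading2, body2, ...].
    parts = re.split(r"^#{1,6}[ \t]+(.+)$", markdown, flags=re.MULTILINE)
    flagged = []
    for heading, body in zip(parts[1::2], parts[2::2]):
        if not heading.strip().endswith("?"):
            continue  # only check headings phrased as questions
        paragraphs = [p for p in body.strip().split("\n\n") if p.strip()]
        words = len(paragraphs[0].split()) if paragraphs else 0
        if words > MAX_ANSWER_WORDS:
            flagged.append((heading.strip(), words))
    return flagged


doc = (
    "## Does Google penalize AI content?\n\n" + "word " * 80 + "\n\n"
    "## Should I use AI for outlines?\n\nYes. Use it for the outline and first draft.\n"
)
print(buried_answers(doc))
```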

Tools and Next Steps

You don’t need an expensive platform to make this work. The teams getting the best results are using a combination of free and low-cost tools:

For brief generation: Claude (via web or API) or ChatGPT. Write a detailed prompt that includes SERP analysis, intent mapping, structure recommendations, and differentiation strategy. Test it on 5–10 keywords and refine based on results.

For research and first draft: Claude, ChatGPT, or Perplexity (for web-aware research). Feed the brief and let it write the first draft. Budget 30–45 minutes for human review and adjustment.

For SEO optimization: Use a tool like Surfer’s content editor (paid) or Yoast (which has a free version), or just run manual checks: keyword in the H1, keyword variations in H2s, meta description under 160 characters, internal links to relevant pieces.
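The manual checks listed above can also be scripted against a published page. This sketch uses Python’s standard `html.parser`. Three details are assumptions made for illustration:

- the `check_page` function itself;
- passing in your own list of keyword variations;
- the rule that same-host and relative links count as internal.

```python
from html.parser import HTMLParser
from typing import Dict, List, Optional
from urllib.parse import urlparse


class OnPageParser(HTMLParser):
    """Collects H1/H2 text, the meta description and link hrefs from one page."""

    def __init__(self) -> None:
        super().__init__()
        self.h1: List[str] = []
        self.h2: List[str] = []
        self.meta_description = ""
        self.hrefs: List[str] = []
        self._inside: Optional[str] = None  # "h1" or "h2" while inside one

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag in ("h1", "h2"):
            self._inside = tag
            getattr(self, tag).append("")
        elif tag == "meta" and (attr.get("name") or "").lower() == "description":
            self.meta_description = attr.get("content") or ""
        elif tag == "a" and attr.get("href"):
            self.hrefs.append(attr["href"])

    def handle_endtag(self, tag):
        if tag == self._inside:
            self._inside = None

    def handle_data(self, data):
        if self._inside:
            getattr(self, self._inside)[-1] += data


def check_page(html: str, keyword: str, variations: List[str],
               site_host: str) -> Dict[str, object]:
    parser = OnPageParser()
    parser.feed(html)
    kw = keyword.lower()
    # Internal = relative or same-host http(s) links; bare "#anchors" are skipped.
    internal = [
        h for h in parser.hrefs
        if not h.startswith("#")
        and urlparse(h).scheme in ("", "http", "https")
        and urlparse(h).netloc in ("", site_host)
    ]
    return {
        "keyword_in_h1": any(kw in h.lower() for h in parser.h1),
        "h2s_with_variation": sum(
            any(v.lower() in h.lower() for v in variations) for h in parser.h2
        ),
        "meta_length": len(parser.meta_description),
        "meta_under_160": 0 < len(parser.meta_description) < 160,
        "internal_links": len(internal),
    }


page = (
    '<html><head><meta name="description" content="How AI for SEO content works.">'
    "</head><body><h1>AI for SEO Content</h1>"
    "<h2>Using AI SEO briefs</h2><h2>Pricing</h2>"
    '<a href="/blog/briefs">briefs</a><a href="https://example.com/x">x</a>'
    '<a href="https://other.com/">o</a><a href="#top">top</a></body></html>'
)
print(check_page(page, "AI for SEO content", ["AI SEO", "SEO brief"], "example.com"))
```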

For ranking and traffic tracking: Google Search Console (free) and Google Analytics (free). Track top-performing keywords, time-on-page, bounce rate, and conversions.
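Search Console lets you export the Performance report as a CSV. Below is a minimal sketch that ranks queries by clicks. It assumes the queries file uses the header `Top queries,Clicks,Impressions,CTR,Position`. Check your own export’s header row, since column names may differ.

```python
import csv
import io
from typing import List, Tuple


def top_queries(csv_text: str, n: int = 10) -> List[Tuple[str, int, float]]:
    """(query, clicks, average position) for the n queries with the most clicks.

    Assumes the header row of a Search Console queries export:
    Top queries,Clicks,Impressions,CTR,Position
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    rows = [
        (r["Top queries"], int(r["Clicks"].replace(",", "")), float(r["Position"]))
        for r in reader
    ]
    return sorted(rows, key=lambda row: row[1], reverse=True)[:n]


sample = (
    "Top queries,Clicks,Impressions,CTR,Position\n"
    "ai seo tools,120,3000,4%,6.2\n"
    "seo brief template,340,5100,6.67%,3.1\n"
    "ai content,15,900,1.67%,14.8\n"
)
for query, clicks, position in top_queries(sample, n=2):
    print(f"{query}: {clicks} clicks, avg position {position}")
```

Time-on-page, bounce rate, and conversions come from Google Analytics rather than Search Console. You would need a separate export, joined on page URL, to see them next to these queries.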

One team replaced an $800/month subscription entirely by building a solid Claude prompt. That’s the opportunity here: you can get 80% of the results for 5% of the cost if you’re willing to put in the work to systematize the process.

The next step is to pick one keyword in your niche, write a detailed SEO brief using AI, publish it with human oversight, and track results for 90 days. If you see top-10 rankings and engagement, scale the process. If not, adjust the brief template and try again.

This is how teams move from “we need more content but can’t afford to hire more writers” to “we’re publishing 47 articles a month and 89% of them rank in the top 10.”

The Real Opportunity: Consistent Publishing at Scale

Here’s what most teams are missing: the real advantage of AI for SEO content isn’t that individual articles are better. It’s that you can finally publish enough content to actually compete.

If you’re publishing one article a month and your competitor is publishing 10, your competitor wins. They own more keywords. They capture more traffic. They have more chances to convert.

AI doesn’t make your articles 10x better. But it does let you publish 10x more without burning out your team or blowing your budget. And at scale, consistency beats perfection.

The 340% organic traffic growth didn’t come from one perfect article. It came from 47 articles a month, 89% of which ranked in the top 10, compounding over months. The $2.3M revenue came from volume and consistency, not magic.

This is the real shift in AI for SEO content: it’s not about replacing writers. It’s about making it possible to publish at the volume that actually moves the needle.

If you’re serious about organic growth, this is the workflow to adopt. Build a brief template, systematize the human review process, publish consistently, and track results. The numbers show it works.

FAQ

Q: Will Google penalize me for using AI-generated content?

A: Google doesn’t penalize AI content itself. It penalizes low-effort, low-insight content. If you’re using AI to research and outline, then having a human write the actual content with original insight and fact-checking, you’re fine. If you’re publishing AI-written content with no human review, you’ll struggle.

Q: How do I know if my AI-assisted content is good enough to rank?

A: Publish it and track for 90 days. If it’s not ranking in the top 10 by then, analyze why: is the brief weak, is the content too generic, is the topic too competitive, or is the on-page SEO missing something? Adjust and try again. The teams getting 83–89% success rates learned this by testing.

Q: Should I use AI to write the whole article or just the outline?

A: Use AI for the outline and first draft, then have a human rewrite sections that need original insight. This hybrid approach is what’s producing the best results. Pure AI generation tends to be thin and generic.

Q: How long does it take to publish an article using this workflow?

A: The team that saw 340% growth spent 3.5 hours per article. That includes brief generation, AI draft, human review and rewriting, SEO checks, and publishing. It’s faster than hiring an agency or writing everything from scratch, but it’s not instant.
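For planning, the per-article figure converts directly into monthly hours. Here is a quick check in Python using the 47 articles and 3.5 hours reported above. The 160-hour working month is an assumption. The source doesn’t say how the team divided those hours among people.

```python
ARTICLES_PER_MONTH = 47        # from the case above
HOURS_PER_ARTICLE = 3.5        # brief, AI draft, human rewrite, SEO checks, publishing
WORKING_HOURS_PER_MONTH = 160  # assumption: roughly one full-time person

monthly_hours = ARTICLES_PER_MONTH * HOURS_PER_ARTICLE
full_time_equivalents = monthly_hours / WORKING_HOURS_PER_MONTH

print(f"{monthly_hours} hours/month, about {full_time_equivalents:.1f} FTE")
```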

Q: What about AI search visibility? Should I optimize for that separately?

A: You don’t need a separate workflow, just a different emphasis. Content that ranks in Google and surfaces in ChatGPT tends to be clear, comprehensive, and well-structured. If you’re writing for both, emphasize clarity and directness. Use lists and tables. Answer the question directly without burying the answer in nuance.

Q: Can I use this for every type of content?

A: It works best for informational content and how-to guides. For highly opinionated pieces or content that relies on unique personal experience, the human writing component becomes more important. For data-heavy content, AI research and synthesis is especially useful.

Final Word

AI for SEO content is not a shortcut to ranking. It’s a force multiplier that lets you publish more content, faster, without sacrificing quality—if you use it correctly.

The teams winning now have figured out the hybrid workflow: AI handles research, outlining, and first drafts. Humans add judgment, original insight, fact-checking, and authority. In the cases above, the result was 83–89% top-10 rankings, 340% organic growth, and millions in revenue.

The opportunity is not in using AI better than your competitors. It’s in using AI consistently while your competitors are still debating whether they should use it at all.

Start with one keyword. Build a brief. Publish with human oversight. Track for 90 days. If it works, scale. That’s how you move from “we need more content” to “we’re dominating search.”